Temperature sensor: TMP36, DHT22, and 10K Thermistor

Here’s a quick comparison of 3 different “low cost” temperature sensors:

- TMP36 at $2.

- 10K Precision Epoxy Thermistor – 3950 NTC at $4.

- DHT22 at $12.50. This one also includes a humidity sensor, which is why it costs more than the other two.

This is how accurate they each claim to be:

| Model | Accuracy in °C | Accuracy in °F |
|---|---|---|
| TMP36 | ±2.00°C | ±3.6°F |
| Thermistor | ±0.45°C | ±0.8°F |
| DHT22 | ±0.50°C | ±0.9°F |

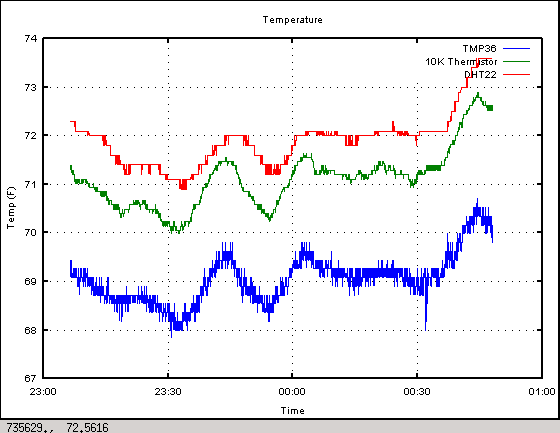

All the data below was collected with a BeagleBone Black, which has a 12-bit ADC. The 10K thermistor was wired as a voltage divider with a 1% 10K resistor. The DHT22 also uses a thermistor internally, but it has a digital output. Readings were taken every 3 seconds over a 2-hour period.
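For anyone who wants to reproduce the conversion from raw ADC counts to temperature, here is a minimal sketch. It is not necessarily the code used for these plots. It assumes a 1.8 V ADC reference, the fixed 10K resistor on the supply side of the divider with the thermistor going to ground, and the simple Beta equation with the nominal 10K at 25°C and B = 3950. The TMP36 part uses the 500 mV offset and 10 mV/°C scale factor from its datasheet. All function and constant names are made up for illustration.

```python
import math

ADC_MAX = 4095           # full-scale count of a 12-bit ADC
ADC_VREF = 1.8           # assumed ADC reference voltage in volts
R_FIXED = 10_000.0       # fixed 1% divider resistor in ohms
R_NOMINAL = 10_000.0     # thermistor resistance at 25 °C in ohms
T_NOMINAL_K = 298.15     # 25 °C expressed in kelvin
BETA = 3950.0            # Beta coefficient of the NTC thermistor


def thermistor_celsius(adc_count):
    """Convert a raw count to °C using the Beta equation.

    Assumes the thermistor is the lower leg of the divider, so
    count / ADC_MAX = R_therm / (R_FIXED + R_therm).
    """
    ratio = adc_count / ADC_MAX
    if not 0.0 < ratio < 1.0:
        raise ValueError("count must be strictly between 0 and full scale")
    r_therm = R_FIXED * ratio / (1.0 - ratio)
    inv_t = 1.0 / T_NOMINAL_K + math.log(r_therm / R_NOMINAL) / BETA
    return 1.0 / inv_t - 273.15


def tmp36_celsius(adc_count):
    """Convert a raw count to °C for a TMP36 (500 mV offset, 10 mV/°C)."""
    volts = adc_count / ADC_MAX * ADC_VREF
    return (volts - 0.5) * 100.0


def c_to_f(celsius):
    """Convert an absolute temperature from °C to °F."""
    return celsius * 9.0 / 5.0 + 32.0
```

With this wiring, a count at half of full scale means the thermistor equals the fixed resistor, which corresponds to 25°C. If the thermistor is on the supply side instead, the resistance formula becomes `R_FIXED * (1 - ratio) / ratio`.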

The sensors are all positioned within 1/4″ of each other. The slow temperature drifts are from the house cooling and the furnace heating it back up.

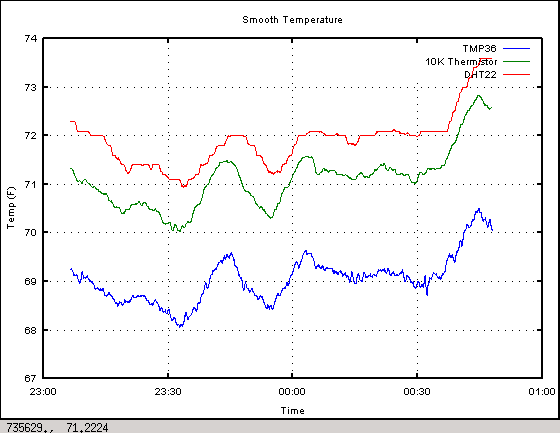

I used a running average filter with a window of 10 samples to clean up the noise a bit, and these are the results.
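A running average like this can be sketched in a few lines. The function name is hypothetical, and the handling of the first few samples is an assumption: until 10 samples have arrived, this version averages whatever is available so far.

```python
from collections import deque


def running_average(samples, window=10):
    """Return the trailing moving average of `samples`.

    Each output is the mean of the most recent `window` inputs.
    During warm-up (fewer than `window` inputs seen) it averages
    the inputs received so far.
    """
    if window < 1:
        raise ValueError("window must be at least 1")
    buf = deque(maxlen=window)
    total = 0.0
    smoothed = []
    for x in samples:
        if len(buf) == window:
            total -= buf[0]      # oldest sample is about to be dropped
        buf.append(x)
        total += x
        smoothed.append(total / len(buf))
    return smoothed
```

At one reading every 3 seconds, a 10-sample window spans 30 seconds. That is short next to the slow drifts from the furnace cycling, so the filter removes jitter without flattening the real temperature changes.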

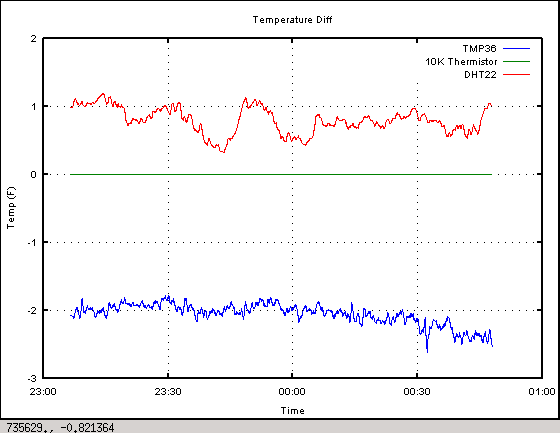

Finally, taking the 10K thermistor as the reference (it claims the tightest accuracy of the three), these are the differences observed. The TMP36 reads consistently about 2°F lower than the thermistor, and the DHT22 reads roughly 1°F higher, even though it also uses a thermistor inside. I don’t know how accurate the ADC inside the DHT22 is, so that might explain some of the difference.
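If the offset really is constant, it can be measured once and subtracted out. The sketch below shows the idea. The function names are hypothetical and the numbers in the usage example are made-up placeholders, not readings from this experiment.

```python
from statistics import mean


def mean_offset(sensor, reference):
    """Average of (sensor - reference) over paired readings."""
    if not sensor or len(sensor) != len(reference):
        raise ValueError("need two non-empty series of equal length")
    return mean(s - r for s, r in zip(sensor, reference))


def apply_offset(sensor, offset):
    """Subtract a previously measured offset from every reading."""
    return [s - offset for s in sensor]


# Illustrative placeholder values in °F, not measured data.
reference_f = [70.0, 70.5, 71.0]
sensor_f = [68.0, 68.5, 69.0]

offset = mean_offset(sensor_f, reference_f)
corrected = apply_offset(sensor_f, offset)
```

This is a single-point correction. It only helps if the difference stays the same across the temperature range you care about, and it makes the sensor agree with the reference, not with the true temperature.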

In conclusion, when you hear someone say “I keep my thermostat at 67°F” and another guy responds “My wife would kill me if I did that. I keep mine at 73°F”, they might both be keeping their house at 70°F. It just depends on which temperature sensor their thermostat uses and how well it was calibrated.